The worst kind of legacy code

A supply chain software maintenance project story from 2006 2020-02-25 #maintenance

I used to work for a small software consultancy in Rotterdam, where we built custom systems for a variety of industries. Some of us had supply chain management domain expertise, built up over the course of a decade maintaining a warehouse management system.

Getting into a complex domain

In 2006, my employer acquired a company for its IP and contacts, to subsequently sell a development project to a government customer. The acquisition included shipment management software, which dealt with things like international shipments and shipping manifest data. I would call supply chain visibility an interesting domain to work in, because it involves highly optimised business processes, run by lots of companies who don’t trust each other.

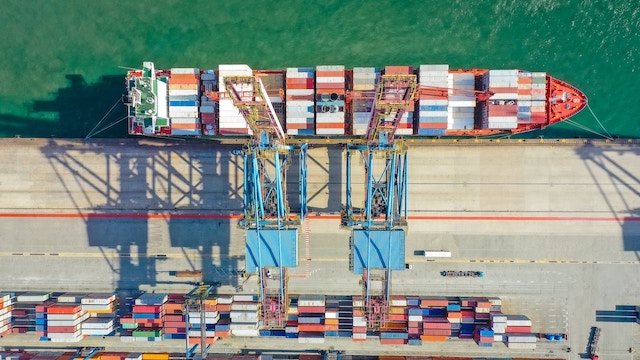

The challenge that the photo (above) shows does not lie in the number of shipping containers: large container ships carry thousands of containers, not millions. Supply chain visibility addresses problems like not knowing what each shipping container contains, or where to find the shipping container that contains a particular customer order’s packages. In theory, each container’s shipping manifest lists its contents. In practice, supply chain participants struggle to get access to accurate aggregated shipping manifest data. This system did not manage big data; it managed bad data.

The wrong kind of legacy

Given the complex domain, we expected complex code. We got more than merely complex code: we got complex legacy code, which in this case meant code written by someone else. While I had experience with the varying quality of other people’s code, this codebase consisted of the worst 100,000 lines of code I had ever seen.

The system documentation posed a more serious issue: we didn’t get any. We also had no access to the anyone on the original development team. We had no choice but to read the code, which didn’t even have comments.

We reverse-engineered our understanding of the system by following the code from its entry points, via the user interface to the back-end. At this point, any documentation at all would have helped. On this project I learned the hard way that relying on the code stops seeming like such a good idea when you come across large sections of apparently unused code, and sections of code that clearly don’t work.

Writing off maintainability

After achieving minimum viable understanding of the code, we committed to making modifications and delivering a production release to our customer six months later. By this point, we’d decided that we would only deliver this system once, and that it would cost so much to make the code maintainable that we shouldn’t attempt to start documenting it. During the project, we only wrote some brief high-level technical architecture documentation to give to the customer, along with detailed installation instructions.

As it happens, I don’t know what happened next. The customer planned to install the software on a secure government network that we shouldn’t have heard of - not the public Internet - and we would have needed a proper security clearance to find out whether our customer ever actually deployed to a production environment. Ironically, and fortunately, we therefore had no obligation to maintain the unmaintainable.